
8/28/2023

PyTorch nn.Sequential call

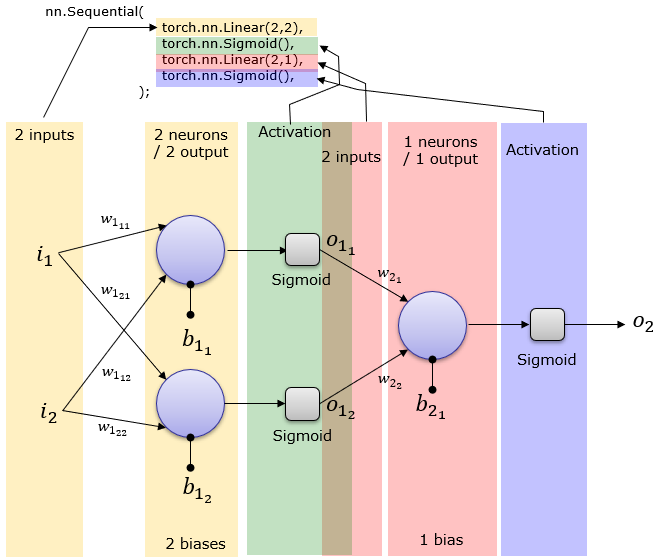

Building a feedforward neural network with PyTorch breaks down into steps. Step 1: loading the MNIST train dataset. Step 2: making the dataset iterable. Step 3: creating the model class. Step 4: … Along the way, it helps to understand how `loss.backward()`, `optimizer.step()` and `optimizer.`… work together, and what `optimizer.step()` and `scheduler.step()` do.

There are 2 ways we can create neural networks in PyTorch: using the `Sequential()` method or using the class method. The class method gives more control over data flow, because you write the `forward()` pass yourself. In this case, we're not going to evaluate or predict with the trained network, because we're just interested in seeing how to create a sequential model and train it.

To do that, access the `nn.Sequential` class and create an instance of it by passing in any number of neural network modules, in the order the data should flow through them. A convenient way is to collect the modules in a list, one block of layers per hidden-layer size:

```python
import torch.nn as nn

# Example values (hypothetical): input features, hidden-layer sizes, dropout probability
nin, layers, p = 20, [64, 32], 0.2

layerlist = []
for i in layers:
    layerlist.append(nn.Linear(nin, i))      # nin input neurons connected to i output neurons
    layerlist.append(nn.ReLU(inplace=True))  # apply activation function: ReLU
    layerlist.append(nn.BatchNorm1d(i))      # apply batch normalization
    layerlist.append(nn.Dropout(p))          # apply dropout to prevent overfitting
    nin = i  # this layer's outputs become the next layer's inputs

model = nn.Sequential(*layerlist)  # unpack the list so the modules are passed in order
```

Keras makes a similar point about customizing its training step: you can do this whether you're building Sequential models, Functional API models, or …, and this pattern does not prevent you from building models with the Functional API.
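To make the contrast between the two ways of building a network concrete, here is a minimal sketch, assuming a small network with hypothetical sizes (10 inputs, 32 hidden units, 1 output). The `nn.Sequential` version can only pass each module's output straight to the next module; the class version writes `forward()` by hand, so it can, for example, add a skip connection around the hidden layer. The class name `SkipNet` and the skip connection are illustrative assumptions, not part of the original post.

```python
import torch
import torch.nn as nn

# Sequential method: data flows through the modules strictly in the order given
seq_net = nn.Sequential(
    nn.Linear(10, 32),
    nn.ReLU(),
    nn.Linear(32, 32),
    nn.ReLU(),
    nn.Linear(32, 1),
)


class SkipNet(nn.Module):
    """Class method (hypothetical example): forward() controls the data flow explicitly."""

    def __init__(self):
        super().__init__()
        self.inp = nn.Linear(10, 32)
        self.hidden = nn.Linear(32, 32)
        self.out = nn.Linear(32, 1)

    def forward(self, x):
        h = torch.relu(self.inp(x))
        # Skip connection: not expressible directly as a plain nn.Sequential chain
        h = h + torch.relu(self.hidden(h))
        return self.out(h)


class_net = SkipNet()
x = torch.randn(5, 10)  # a batch of 5 samples with 10 features each
print(seq_net(x).shape)    # torch.Size([5, 1])
print(class_net(x).shape)  # torch.Size([5, 1])
```

Both models hold the same three `nn.Linear` layers and therefore the same number of parameters; only the route the data takes differs.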

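In Keras, once you override the model's `train_step()`, you will then be able to call `fit()` as usual, and it will be running your own learning algorithm: `train_step()` is the function that is called by `fit()` for every batch of data. Core PyTorch has no built-in `fit()`, so you write the training loop yourself and report progress at the end of each epoch, for example with `print('Epoch: {} Training Loss: {:.4f} Validation Loss: {:.4f}'.format(epoch, train_loss, valid_loss))`.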
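The epoch summary printed in the original snippet comes at the end of a PyTorch training loop. Below is a minimal, self-contained sketch of such a loop, assuming synthetic regression data, an `Adam` optimizer and a `StepLR` scheduler; these are illustrative choices, not taken from the original post. It shows where `loss.backward()`, `optimizer.step()` and `scheduler.step()` sit, and how the accumulated losses are averaged with `len(loader.sampler)`. The loader names `train_ds_loader` and `test_ds_loader` follow the original snippet; the data, sizes and hyperparameters are hypothetical.

```python
import torch
import torch.nn as nn
from torch.utils.data import DataLoader, TensorDataset

torch.manual_seed(0)

# Synthetic regression data (an assumption for illustration): y = X @ w + noise
X = torch.randn(256, 4)
w = torch.tensor([[1.5], [-2.0], [0.5], [3.0]])
y = X @ w + 0.1 * torch.randn(256, 1)

train_ds_loader = DataLoader(TensorDataset(X[:192], y[:192]), batch_size=32, shuffle=True)
test_ds_loader = DataLoader(TensorDataset(X[192:], y[192:]), batch_size=32)

model = nn.Sequential(nn.Linear(4, 16), nn.ReLU(), nn.Linear(16, 1))
criterion = nn.MSELoss()
optimizer = torch.optim.Adam(model.parameters(), lr=0.02)
# Halve the learning rate every 5 epochs (hypothetical schedule)
scheduler = torch.optim.lr_scheduler.StepLR(optimizer, step_size=5, gamma=0.5)

history = []
for epoch in range(1, 11):
    train_loss, valid_loss = 0.0, 0.0

    model.train()
    for xb, yb in train_ds_loader:
        optimizer.zero_grad()            # clear gradients left over from the previous batch
        loss = criterion(model(xb), yb)  # mean loss over the batch
        loss.backward()                  # compute gradients of the loss w.r.t. the parameters
        optimizer.step()                 # update the parameters using those gradients
        train_loss += loss.item() * xb.size(0)  # convert the batch mean back to a batch sum

    model.eval()
    with torch.no_grad():
        for xb, yb in test_ds_loader:
            valid_loss += criterion(model(xb), yb).item() * xb.size(0)

    scheduler.step()  # adjust the learning rate once per epoch, after the optimizer steps

    # Average over the number of samples each loader draws
    train_loss = train_loss / len(train_ds_loader.sampler)
    valid_loss = valid_loss / len(test_ds_loader.sampler)
    history.append((train_loss, valid_loss))
    print('Epoch: {} Training Loss: {:.4f} Validation Loss: {:.4f}'.format(epoch, train_loss, valid_loss))
```

In this sketch `optimizer.step()` runs once per batch, while `scheduler.step()` runs once per epoch and only changes the learning rate the optimizer will use next.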

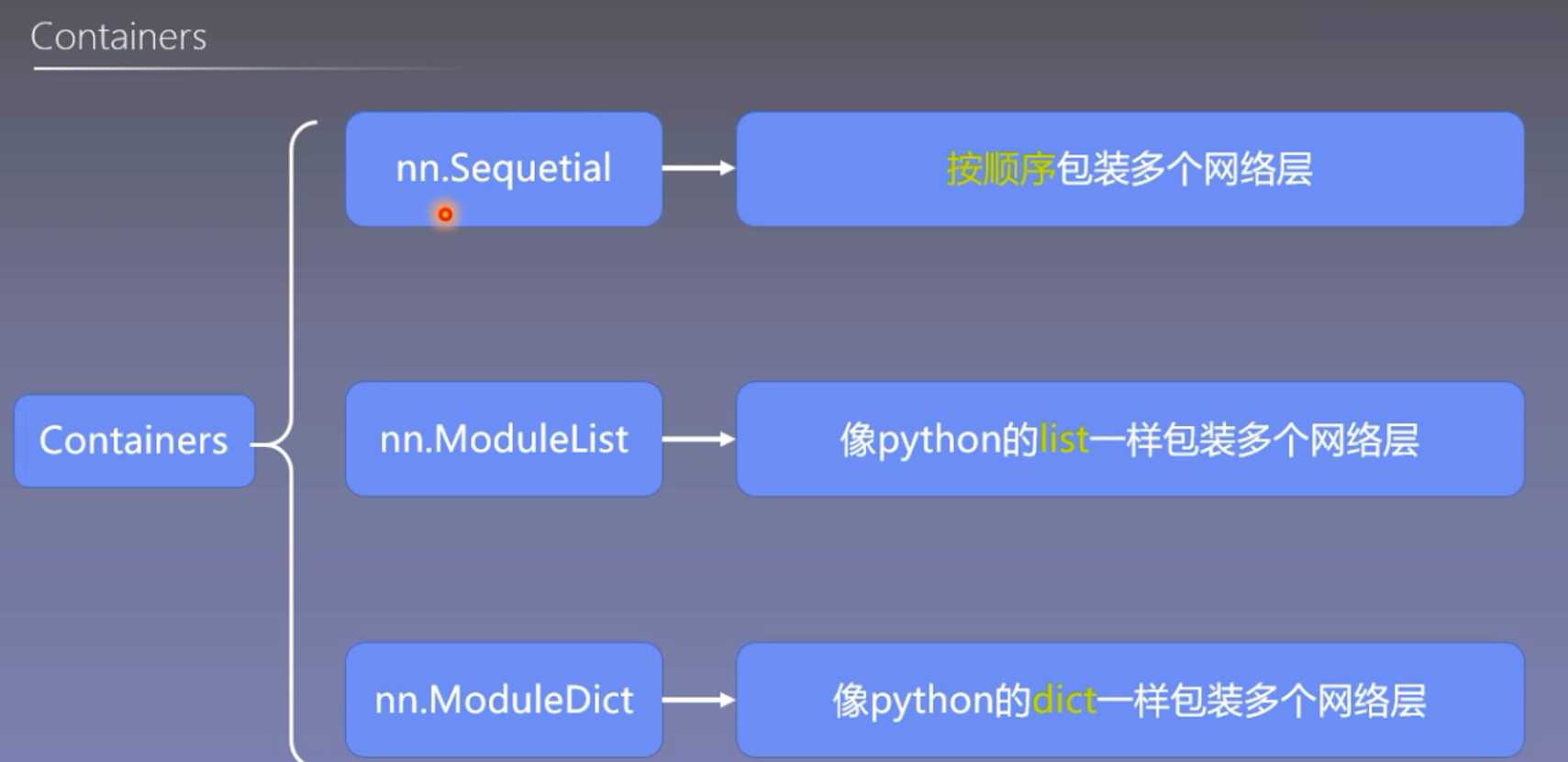

In this post, you will discover the simple components you can use to create neural networks and simple deep learning models in PyTorch. In its simplest form, a multilayer perceptron is a sequence of layers connected in tandem, which is exactly the structure `nn.Sequential` captures. The PyTorch documentation lists two constructor signatures, `class torch.nn.Sequential(*args: Module)` and `class torch.nn.Sequential(arg: OrderedDict[str, Module])`, and describes the class as a sequential container: modules are added to it in the order they are passed to the constructor.

When training such a model, the losses accumulated over an epoch are divided by the number of samples each data loader draws, giving per-sample averages: `train_loss = train_loss / len(train_ds_loader.sampler)` and `valid_loss = valid_loss / len(test_ds_loader.sampler)`.
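The two `nn.Sequential` signatures from the PyTorch documentation can be compared with a short sketch. The layer sizes (784 inputs and 10 outputs, as for flattened 28x28 images) and the names `fc1`, `relu1` and `fc2` are hypothetical choices for illustration.

```python
from collections import OrderedDict

import torch
import torch.nn as nn

# Form 1: modules passed positionally; they are registered as "0", "1", "2", ...
mlp = nn.Sequential(
    nn.Linear(784, 128),
    nn.ReLU(),
    nn.Linear(128, 10),
)

# Form 2: an OrderedDict gives each module a name; they still run in insertion order
named_mlp = nn.Sequential(OrderedDict([
    ('fc1', nn.Linear(784, 128)),
    ('relu1', nn.ReLU()),
    ('fc2', nn.Linear(128, 10)),
]))

x = torch.randn(4, 784)  # e.g. a batch of 4 flattened 28x28 images
print(mlp(x).shape)        # torch.Size([4, 10])
print(named_mlp(x).shape)  # torch.Size([4, 10])

# Layers can be reached by position in both forms, and by name in the second
print(named_mlp.fc1 is named_mlp[0])                     # True
print([name for name, _ in mlp.named_children()])        # ['0', '1', '2']
print([name for name, _ in named_mlp.named_children()])  # ['fc1', 'relu1', 'fc2']
```

The named form behaves the same at runtime; it mainly makes individual layers easier to find when inspecting or fine-tuning the model.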
